The previous post gave a general description of the two major phases that take place during import: parsing the file into memory (the ReadFile() method of the Base_Reader base class) and converting that format-specific representation into a common format-neutral data model (Transfer()).

By default, that works as a blocking scenario, i.e. every step completes with a fully constructed internal representation in memory:

- ReadFile() completes with a fully constructed in-memory representation of the file;

- Transfer() completes with fully converted data stored in the internal data model (ModelData_Model).

Although this is perfectly fine when your users work with smaller files, it becomes a drawback when working with large ones, especially huge assemblies containing hundreds or thousands of sub-assemblies and parts. In such scenarios a user (working with the app which integrates CAD Exchanger SDK) might not plan to use the whole assembly but only a small chunk thereof (sub-tree(s) or particular part(s)). However, the above out-of-the-box scenario

    cadex::STEP_Reader aReader;     // STEP reader
    cadex::ModelData_Model aModel;  // document encapsulating data
    if (aReader.ReadFile (theFileName) && aReader.Transfer (aModel)) {
        ExploreDataContents (aModel); // do specific stuff
    }

will force the user to wait until the entire model is converted into memory, and will waste the memory spent on intermediate working objects and on end data which may never be needed. In GUI applications this often also means blocking the UI until the entire model gets loaded into the scene and displayed, which may take tens of seconds if not more.

To address that challenge and to enable developing more responsive and efficient applications on top of CAD Exchanger SDK, we implemented support for delayed conversion. In essence, it implements the proxy pattern, deferring heavy-weight work until the data is first accessed. For instance, when converting a large multi-part Parasolid file (.x_t, .x_b, .xmt_bin or other filename extensions), the file is parsed and its product structure (i.e. the graph of assemblies and parts) is converted into the data model (see ModelData_Assembly and ModelData_Part), but no real heavy-weight conversion of geometry and topology (curves and surfaces; solids, shells, faces, edges, etc.) takes place. Under the hood, internal ‘hooks’ are created (we call them ‘[data] providers’) which trigger the real conversion upon first data access, for example:

    ModelData_BRepRepresentation aBRep = aPart.BRepRepresentation();
    const ModelData_BodyList& aBodyList = aBRep.Get(); // conversion is triggered inside when data is accessed for the first time

We call this triggering process ‘flushing’ the providers. Once a provider has been called for the first time (and flushed), any further access takes practically no time because the resulting data is already stored in the data model.
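To make the idea more tangible, here is a minimal, SDK-independent sketch of such a provider-backed proxy. The names LazyRepresentation and BodyList are made up for illustration and are not part of the CAD Exchanger API; the sketch only shows the general technique (run a callback on first access, cache the result), not how the SDK implements it internally.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for converted B-Rep data (not an SDK type).
struct BodyList {
    std::vector<std::string> bodies;
};

// Lazy proxy: stores a provider callback and runs it on first access only.
class LazyRepresentation {
public:
    using Provider = std::function<BodyList()>;

    explicit LazyRepresentation(Provider theProvider)
        : myProvider(std::move(theProvider)) {
        assert(myProvider && "a provider callback is required");
    }

    // The first call flushes the provider; later calls return cached data.
    const BodyList& Get() {
        if (!myIsFlushed) {
            myData = myProvider();
            myProvider = nullptr; // release whatever the callback captured
            myIsFlushed = true;
        }
        return myData;
    }

    bool IsFlushed() const { return myIsFlushed; }

private:
    Provider myProvider;
    BodyList myData;
    bool myIsFlushed = false;
};
```

Note that this sketch is not thread-safe: if several threads may call Get() on the same object, the first access would need extra synchronization.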

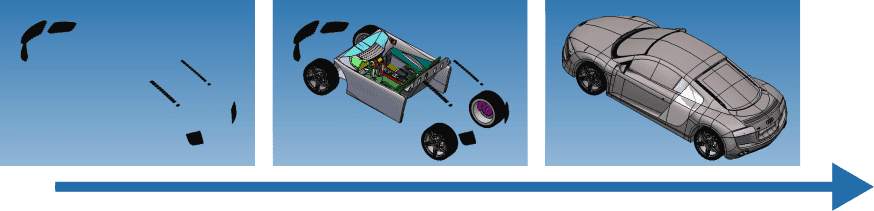

We usually try to eat our own ‘dog food’ and this mechanism is used in CAD Exchanger Lab. You probably noticed that when importing a large assembly it is often gets displayed incrementally by periodically updating the scene, for example:

The efficiency of delayed conversion strongly depends upon the capabilities of the file format itself. For instance, JT allows very efficient delayed conversion because even the parsing phase can be deferred, so ReadFile() can be extremely fast, whereas STL does not enable any delayed conversion at all (as the contents of an STL file are just a plain list of lines with triangle data). However, most formats (such as Parasolid, ACIS, IGES, STEP, JT, etc.) do enable a very reasonable efficiency gain, especially for the Transfer() step, which takes the lion’s share of the conversion time.

Additional efficiency comes from flushing providers in parallel, which shortens conversion time even further. Even when parts are processed one after another, the conversion of each part tries to exploit parallelism internally, but applying parallelism at a higher level (i.e. across parts) brings greater benefits.
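As a rough illustration of flushing across parts, the following standalone sketch runs one task per provider with std::async and collects the results in the original order. PartProvider and FlushAllInParallel are hypothetical names chosen for this example, and the returned integer simply stands in for converted data; the SDK's own scheduling is not shown here.

```cpp
#include <functional>
#include <future>
#include <vector>

// Hypothetical provider: converts one part and returns, say, its face count.
using PartProvider = std::function<int()>;

// Launches every provider as its own asynchronous task, then waits for all
// of them and returns the results in the same order as the input.
std::vector<int> FlushAllInParallel(const std::vector<PartProvider>& theProviders) {
    std::vector<std::future<int>> aTasks;
    aTasks.reserve(theProviders.size());
    for (const auto& aProvider : theProviders) {
        aTasks.push_back(std::async(std::launch::async, aProvider));
    }

    std::vector<int> aResults;
    aResults.reserve(aTasks.size());
    for (auto& aTask : aTasks) {
        aResults.push_back(aTask.get()); // blocks until this task is done
    }
    return aResults;
}
```

One task per part keeps the sketch short; for assemblies with thousands of parts a bounded thread pool would likely be a better fit.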

Potential downsides

Most things in this world are double-edged swords, and delayed conversion is no exception. Using it efficiently requires a decent understanding of what happens underneath, otherwise you might end up losing efficiency:

- Increased total time. Instead of executing in parallel (which is what the SDK itself does in the out-of-the-box scenarios) you may end up flushing providers sequentially, and internal (nested) parallelism might not be enough to compensate for the lost opportunities of the higher-level one. This can often happen if all the parts are eventually accessed (and hence loaded and converted).

- Imbalanced application responsiveness. If parts in a file differ greatly in complexity (say, one contains a wireframe body of a couple of hundred edges whereas the other is a solid of a few thousand faces), your end users might experience very different response times in your app. For instance, clicking a part with a (not yet loaded) wireframe body in your GUI will retrieve its contents immediately (by parsing, converting and displaying) whereas clicking the one with the large solid body may take a few seconds.

To address that, you might design smarter scenarios in which your app flushes providers in the background while letting the user work with already converted data.
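One possible shape of such a scenario is sketched below, again without any SDK types: a worker thread walks through all parts and flushes them, while any other thread (for example the UI thread reacting to a click) can request a particular part at any time. LazyPart and FlushInBackground are hypothetical names; std::call_once ensures that each provider runs exactly once no matter which thread gets there first.

```cpp
#include <functional>
#include <memory>
#include <mutex>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical lazily converted part (not an SDK class).
class LazyPart {
public:
    explicit LazyPart(std::function<int()> theProvider)
        : myProvider(std::move(theProvider)) {}

    // Safe to call from any thread: the provider runs exactly once and
    // concurrent callers wait until the result is available.
    int FaceCount() {
        std::call_once(myOnce, [this] { myFaceCount = myProvider(); });
        return myFaceCount;
    }

private:
    std::function<int()> myProvider;
    std::once_flag myOnce;
    int myFaceCount = 0;
};

// Starts a worker thread that flushes all parts one by one.
// The caller owns the returned thread and must join it.
std::thread FlushInBackground(std::vector<std::shared_ptr<LazyPart>> theParts) {
    return std::thread([theParts] {
        for (const auto& aPart : theParts) {
            aPart->FaceCount(); // no-op if the part was already flushed
        }
    });
}
```

If the user clicks a part the worker has not reached yet, the click itself triggers the conversion and the worker later skips that part; a real app might also reorder the queue so that visible or selected parts are flushed first.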

General recommendations

If all of the following are true in your users’ workflows, you may prefer to use the blocking out-of-the-box behavior:

- your users typically work with the entire file contents;

- the files are typically small and/or contain one or just a few parts;

- parts in the file typically have a single representation (B-Rep or a single polygonal representation, i.e. no LODs).

However, if you anticipate that your users might need to work with only some excerpts of the file (a sub-tree or selected parts) or might experience long conversion times, then delayed conversion could give you great leverage in developing more efficient and responsive apps. For more details please refer to the CAD Exchanger SDK User’s Guide.

Hope this was helpful. Thanks for reading! And drop me a comment below or just an email at info@cadexchanger.com if you have any feedback or would like to follow up.